Generative Characters

I’ve been working on something I hope to launch in the next couple of weeks, but I thought I’d share a sneak peek at a work in progress as a way of capturing this moment in visual AI tooling.

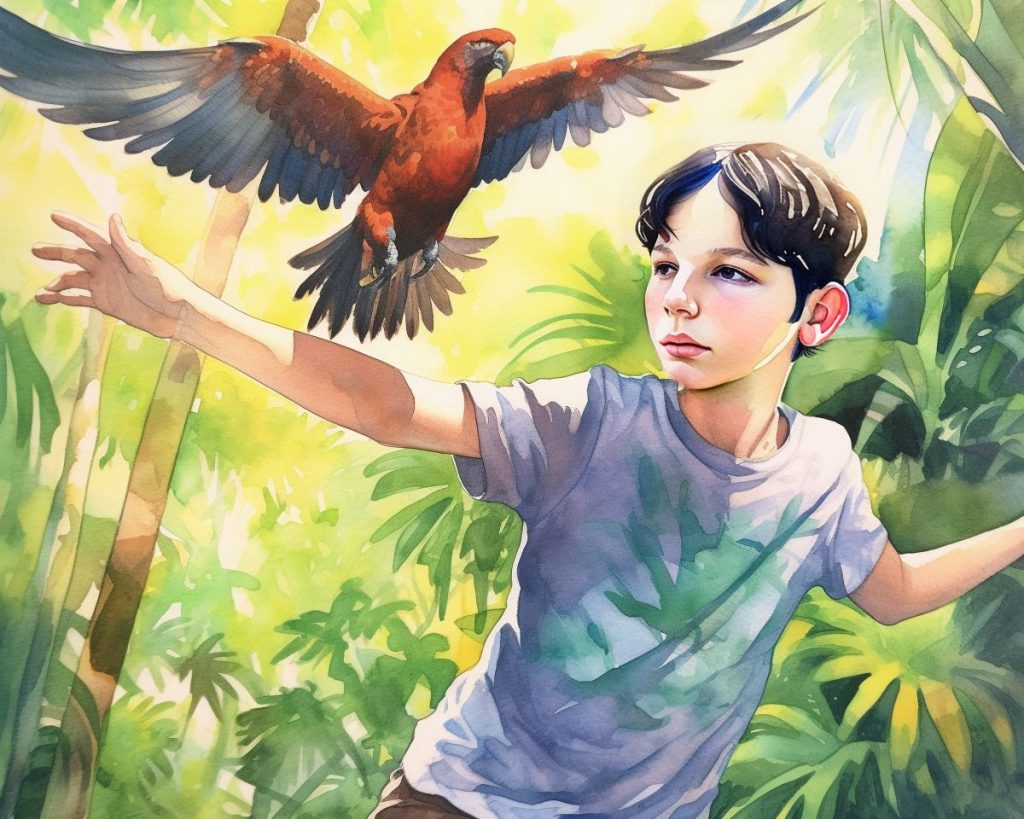

In the project I’m working on, the underlying goal is to develop a reliable, efficient and repeatable workflow for creating custom watercolor illustrations featuring specific, real people. A fantasy six months ago, but very real today.

The process requires a fair amount of setup and means juggling multiple generative tools. In the images below, I start with a scene I’ve prompted in Midjourney. Midjourney is best at taking a prompt and creating beautiful, complex scenes, but it’s not great at producing known, reusable characters. So I’ve taken the scene from Midjourney and imported it into a custom fine-tuned build of Stable Diffusion (SD). SD is amazing at generating portraits, but it can’t really handle scenes where the subject isn’t front and center.

A recently developed SD technique called DreamBooth lets you fine-tune a model on as few as 8–10 photos of your subject and then generate unlimited images of that subject in virtually any style. It works with all manner of subjects, but for my purposes I’m training it on faces. In this example, I’ve trained it on a small dataset of photos of my younger brother, Jordan Sucher, from when he was about eight years old. Using a process known as “inpainting,” I isolate a specific portion of an image and replace it with the output generated from a prompt: in my case, replacing the face Midjourney created with Jordan’s face.
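To make the inpainting step a little more concrete, here is a toy Python sketch of the masking idea behind it. It is not Stable Diffusion’s inpainting itself. It assumes a tiny grayscale “image” stored as a grid of integers and a hypothetical `composite_with_mask` helper: pixels where the mask is `True` come from the generated image, and everything else is kept from the original. A real inpainting model also conditions its generation on the unmasked surroundings so the new face blends into the scene; this sketch only shows which pixels get replaced.

```python
# Toy illustration of inpainting's mask: a real image would be an RGB array,
# and the "generated" pixels would come from a diffusion model, not a constant.
Image = list[list[int]]
Mask = list[list[bool]]


def composite_with_mask(original: Image, generated: Image, mask: Mask) -> Image:
    """Hypothetical helper: take generated pixels where mask is True, else keep original."""
    if not (len(original) == len(generated) == len(mask)):
        raise ValueError("original, generated and mask must have the same height")
    result: Image = []
    for orig_row, gen_row, mask_row in zip(original, generated, mask):
        if not (len(orig_row) == len(gen_row) == len(mask_row)):
            raise ValueError("original, generated and mask rows must have the same width")
        # Build a new row so the original image is left untouched.
        result.append([g if m else o for o, g, m in zip(orig_row, gen_row, mask_row)])
    return result


scene = [[10, 10, 10], [10, 10, 10], [10, 10, 10]]         # stand-in for the Midjourney scene
new_face = [[99, 99, 99], [99, 99, 99], [99, 99, 99]]      # stand-in for the generated output
face_mask = [[False, False, False], [False, True, False], [False, False, False]]

print(composite_with_mask(scene, new_face, face_mask))
# [[10, 10, 10], [10, 99, 10], [10, 10, 10]]
```

Only the center pixel, where the hypothetical face mask is set, takes the generated value; the rest of the scene is preserved.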

The final step uses the newest tool on the market, the one with the lowest barrier to entry and a ton of buzz this week. Photoshop’s new beta “Generative Fill” feature combines raw generation, inpainting and outpainting, all built into a very familiar interface. In the final image, I’ve expanded the canvas and filled it with a larger, dense jungle around my brother. I didn’t love some of the choices Generative Fill made, so I simply regenerated those areas: refining details like the parrot’s wings and the boy’s hand, and replacing his shorts with jeans. (I also added a bit of a smile!)

The results are astounding. Not perfect, but astounding.

Today, it takes three very distinct generative AI tools, a month-long learning curve and plenty of Google Colab processing time to accomplish these goals. I’m sure we’ll look back on this post in another 3–9 months and laugh at this workflow as these techniques become commonplace. Or maybe we’ll pine for this simpler time, when the learning curve was steeper and our collective understanding of reality, art and AI ethics hadn’t yet fully dissolved. We’ll find out soon enough.