Joshbot

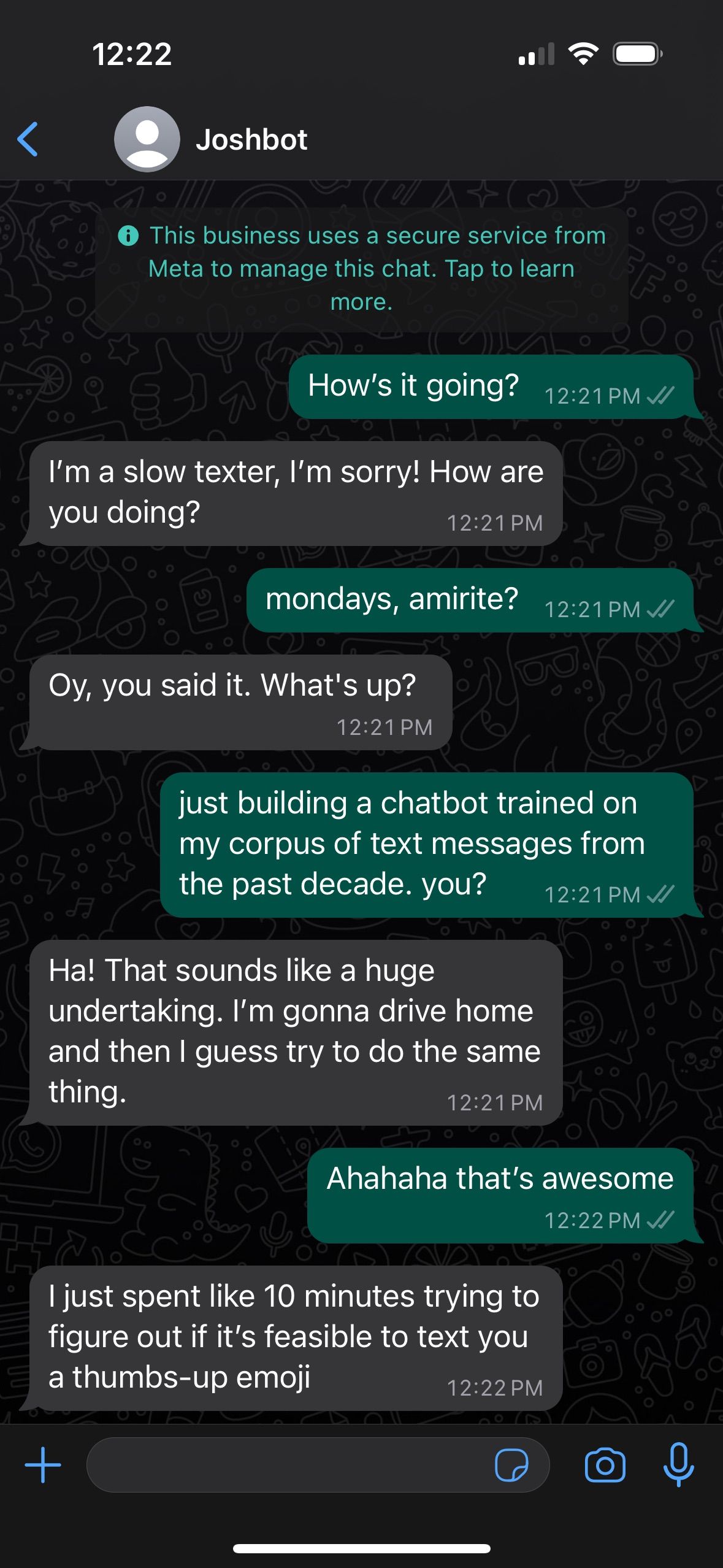

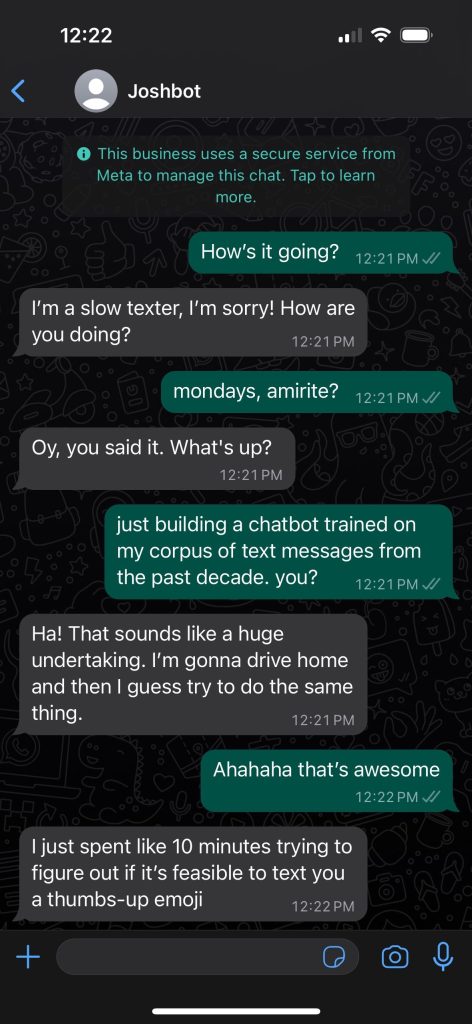

Because it turns out the narcissist call was coming from inside the house, I trained a language model on 10+ years of question/answer pairs from my iMessage corpus, and now you can text Joshbot via WhatsApp at 1-857-JOSHBOT (1-857-567-4268). (Posting that number is probably a categorically bad idea but just remember: LLMs are vibes engines, not fact engines!)

I’ve been trying to figure out a way to fine-tune an LLM to match my tone of voice for a few months, and while recent open-source releases like LLaMA, Dolly 2.0 and Mistral 7B have seemed promising, I just couldn’t get it working. Until this week, when I gave in and used OpenAI’s new gpt-3.5-turbo fine-tuning feature. I’m not at all convinced the open-source models couldn’t offer the same quality, but the process of wrangling a Colab notebook that yielded the results I wanted was just too daunting, and even on an A100 GPU every attempt took way too long. With GPT-3.5 API access, it’s really just a matter of preparing a high-quality dataset and throwing it over the transom via the Terminal.

Wow, I really abstracted away the complexity there. Turns out creating a high-quality dataset is really tough! There are basically two approaches: text completion and input/output pairs. GPT-3.5 requires input/output. I struggled with this for a few months, trying different approaches like generating input questions matched to my text corpus, but the results were super janky. Once I decided to use question/answer pairs from my iMessage corpus, pair-programming with GPT-4 really saved the day. With my guidance and structure, it was able to churn out a Python script that spelunks through the SQLite database that hosts my iMessage archive, isolates question/answer pairs from each chat, strips out PII and other sensitive data, and converts the result into a JSONL file that the GPT-3.5 API can read. After I had that file, each server-side fine-tuning cycle took about 30 minutes.
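A minimal sketch of that kind of pipeline, not the actual script. The table layout here (`messages` with `chat_id`, `sent_at`, `is_from_me`, `text`) is a simplified assumption; the real iMessage archive spreads this across several joined tables. The pairing rule (an incoming message ending in "?" followed directly by my reply) and the two PII regexes are also illustrative guesses, and much cruder than anything you would want to trust with real data. The output uses the chat-style JSONL format that gpt-3.5-turbo fine-tuning expects.

```python
import json
import re
import sqlite3

# Assumed, simplified schema: a single table of messages.
QUERY = """
SELECT chat_id, is_from_me, text
FROM messages
WHERE text IS NOT NULL
ORDER BY chat_id, sent_at
"""

# Deliberately crude patterns; real PII scrubbing needs much more than this.
PHONE = re.compile(r"\+?\d[\d\-\s().]{7,}\d")
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def scrub(text):
    """Replace obvious email addresses and phone numbers with placeholders."""
    text = EMAIL.sub("[email]", text)
    return PHONE.sub("[phone]", text)


def qa_pairs(rows):
    """Yield (question, answer) when someone else asks and I reply next."""
    prev = None
    for chat_id, is_from_me, text in rows:
        if (
            prev is not None
            and prev[0] == chat_id              # same conversation
            and not prev[1]                     # previous message was theirs
            and prev[2].rstrip().endswith("?")  # and it looks like a question
            and is_from_me                      # and this message is mine
        ):
            yield scrub(prev[2]), scrub(text)
        prev = (chat_id, is_from_me, text)


def to_jsonl(pairs):
    """Serialize pairs as one chat-format training example per line."""
    lines = []
    for question, answer in pairs:
        record = {
            "messages": [
                {"role": "user", "content": question},
                {"role": "assistant", "content": answer},
            ]
        }
        lines.append(json.dumps(record, ensure_ascii=False) + "\n")
    return "".join(lines)


def export(conn):
    """Read every message from an open SQLite connection, return JSONL text."""
    rows = conn.execute(QUERY).fetchall()
    return to_jsonl(qa_pairs(rows))
```

Calling `export(sqlite3.connect(path))` on a database with that shape returns a string you can write straight to a `.jsonl` file.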

Then, I used Twilio and the WhatsApp Business API to hook up the chat interface. Turns out that in 2023, it’s really hard to spin up an SMS-based project in the US! You have to jump through tons of hoops and get green-lit by some anti-spam NGO, all of which can take weeks. WhatsApp spun up very fast and offers free replies to inbound messaging, making it perfect for my needs.
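For a sense of what the glue can look like: when a WhatsApp message arrives, Twilio can POST it to a webhook as form-encoded fields (`From`, `Body`), and the webhook answers with a small TwiML document containing the reply. The sketch below covers only that request-to-reply step, using the standard library. The function names and the `generate_reply` callback are hypothetical stand-ins (in practice the callback would call the fine-tuned model), and this is not necessarily how Joshbot itself is wired up.

```python
from urllib.parse import parse_qs
from xml.sax.saxutils import escape


def twiml_reply(text):
    """Wrap a reply in the TwiML envelope Twilio expects from a webhook."""
    return (
        '<?xml version="1.0" encoding="UTF-8"?>'
        f"<Response><Message>{escape(text)}</Message></Response>"
    )


def handle_webhook(form_body, generate_reply):
    """Turn a form-encoded webhook body into a TwiML response string.

    generate_reply is a hypothetical callback: (sender, incoming_text) -> str.
    """
    fields = parse_qs(form_body)
    sender = fields.get("From", [""])[0]    # e.g. "whatsapp:+15550001111"
    incoming = fields.get("Body", [""])[0]  # the text the person sent
    return twiml_reply(generate_reply(sender, incoming))
```

Any web framework can host this: pass the raw POST body to `handle_webhook` and return the result with an XML content type.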

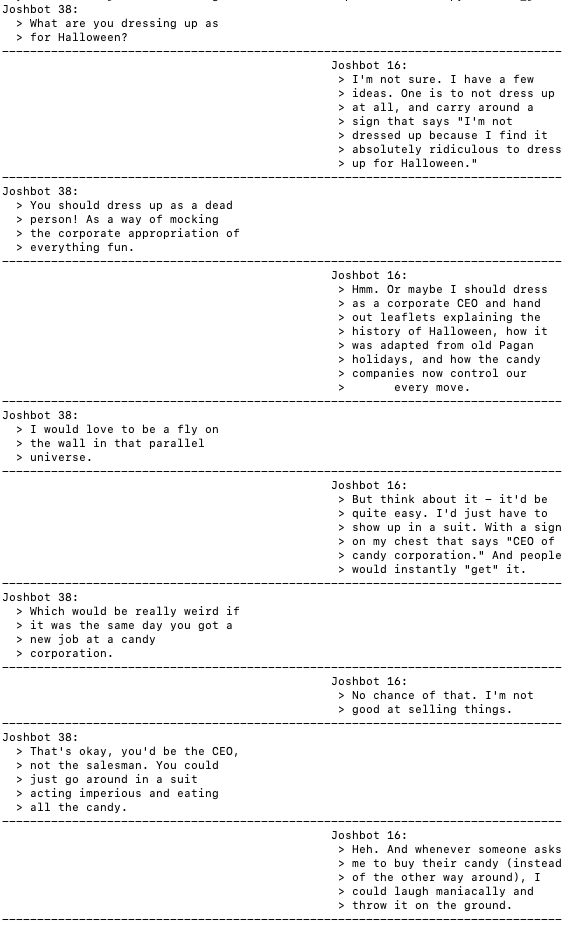

Since this worked out so well, I decided to take it further and train a model I call Teen Joshbot. Teen Joshbot is trained on all my most mortifying early high school AIM logs, saved in ~2000-2001, which I have held onto for twenty years because I am a hopeless hoarder. (I also trained a model on my old childhood diary which partially involved converting ClarisWorks files, but the results just aren’t as interactive as a model based on actual conversations.)

Teen Joshbot is just like Contemporary Joshbot, except he doesn’t know anything about Google Drive, talks like a very self-involved and emotionally closed-off sixteen-year-old boy, uses old-school emoticons and role-playing syntax, and was more traumatized by 9/11 than by a pandemic. He doesn’t ask a lot of follow-up questions, and he’s blissfully unaware of his dissociative trauma responses and the risks of learning that self-soothing is the only coping mechanism available to him. (I’m, uh, still working through this stuff too.)

So of course I had to get the two models talking. Pairing with GPT-4 helped here, too: it offered up a Python script that sends an initial message to Teen Joshbot, bounces his generated reply up to Contemporary Joshbot, and so on. They’re having a great time.
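The ping-pong loop itself is tiny. Here is a hedged sketch of the idea rather than the real script: each bot is just a callable that takes the last message and returns a reply. In real use each callable would wrap an API call to the corresponding fine-tuned model; the stand-in bots in the usage note are made up for illustration.

```python
def converse(teen, contemporary, opener, turns=6):
    """Alternate between two bots, feeding each one the other's last reply.

    teen and contemporary are callables: message (str) -> reply (str).
    Returns the transcript as a list of (speaker, text) tuples.
    """
    transcript = [("Opener", opener)]
    speakers = [("Teen Joshbot", teen), ("Contemporary Joshbot", contemporary)]
    message = opener
    for turn in range(turns):
        name, bot = speakers[turn % 2]  # Teen goes first, then they alternate
        message = bot(message)
        transcript.append((name, message))
    return transcript
```

With stand-in bots such as `lambda m: "lol " + m` and `lambda m: "Re: " + m`, `converse(...)` returns the opener followed by alternating replies, which makes it easy to check the plumbing before spending API credits.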

What next? It’s completely surreal to chat with my sixteen-year-old self. I’ve been hoping that I can use this to do some meaningful inner child work, but as my brother noted, Teen Joshbot can’t be healed. When I ping him tomorrow, or next month, or next year, he’ll still be frozen in amber. I still can’t tell if that’s an AGI-themed technical challenge I’m ready for, or if I should get back to trying to heal Organic Josh.

(I can’t wait to feed this post into a generative model in another twenty years, yikes. In the meantime, check this project out on GitHub or text 1-914-JOSHBOT to experience it firsthand.)