Headless VCR

I’ve posted a bunch about working with retro media and hardware, especially since cleaning out my parents’ basement after recent fall flooding in NYC.

That project yielded the Brother EP-44 word processor, a vintage 1984 typewriter with a serial port that I posted about here, but that just scratches the surface of the stuff I’ve been messing with.

For example, I recently sourced a VCR so I could digitize some old tapes I found in my parents’ house. This is a wonderful and largely unexplored archive: tapes of Star Trek and Simpsons episodes I recorded in the 90s (with wonderful commercial breaks), old family footage, and a surprising amount of random promotional tapes that we saved probably just so we’d have extra tapes when we needed them. (That’s what you did in those days!)

I started digitizing all of it, and I’ve posted some on YouTube; you can check out my tapes here.

And then I started working with the new OpenAI Vision API for Joshbot and it got me thinking…

…why not create a headless VCR? What if I piped the output of my VCR directly into the serial port of my Brother EP-44 word processor? There’s something so intoxicating about hooking up GPT to that very old analog device, and I just wanted to keep exploring the boundaries of that. And I’d already put together most of the components for other projects.

So one recent Sunday I took a few hours and made it happen:

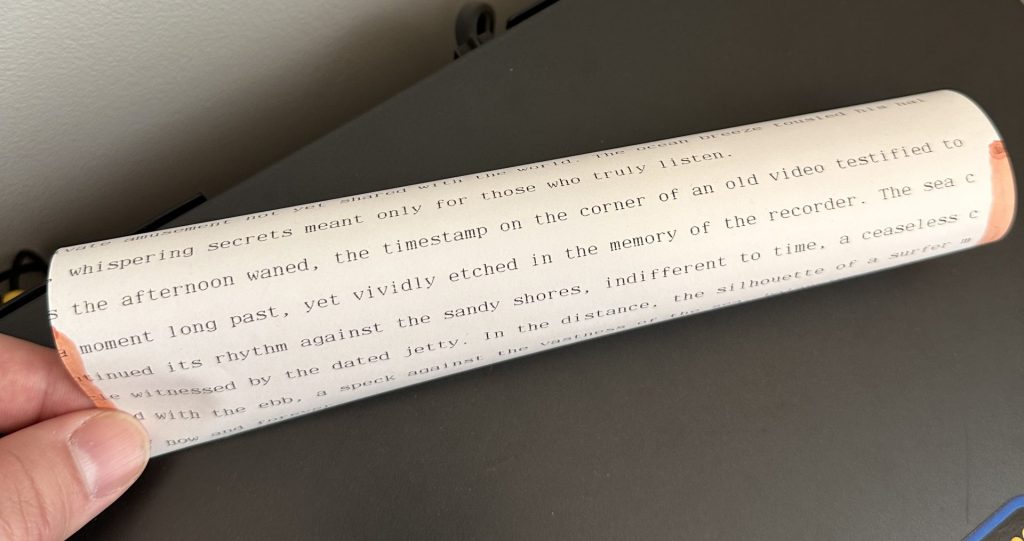

In the video above, I’m playing a 1990 tape recording of Rockaway Beach. (The source is a sublime video, which you can see here.) As the tape runs, the typewriter generates an ongoing narrative based on what it’s seeing. It’s wild.

The linchpin is an El Gato digitizer that turns my analog video streams into a webcam input on my Mac. From there, my script samples the video every 20 seconds, generating a still frame that is passed to the Vision API. The Vision API is instructed to turn what it sees into a narrative, and the conversation history provides context from earlier moments of the livestream.

I decided not to use the tape’s audio track, even though I could hook this up using the Whisper API’s near-realtime transcription functionality. I just thought it’d be more interesting to see how Vision interprets what it’s seeing without the context provided by an audio track.

I also experienced with a lot of different frame rates before landing on 1 frame per 20 seconds. It’s not perfect, but it’s a very good sweet spot that gives my API calls time to return and doesn’t cost an arm and a leg sending images for analysis at 30fps. Your eyes might need 30fps to take in video, but the frame rate required to make sense of text is a lot lower. I’m sure someone’s written a lot about the effective frame rate of a novel. The hardest part for me is figuring out a prompt that works with tapes with a lot of edits. My current script works very well for homemade camcorder footage that just sort of runs and runs, but I suspect it’d get very confused with professionally-edited tapes that jump from scene to scene. I haven’t decided how far to push this yet.

It’s also very funny when Vision decides to incorporate the camcorder’s timestamp, or the blue or black color block that appears when the tape player first starts: